Agile Maturity Assessment: Why It Often Fails

For several years now, I have been speaking almost every week with Heads of Agile Transformation and Leads from Agile Centers of Excellence. By now, that adds up to well over 100 conversations.

And do you know what I hear over and over again? Many companies already run Agile maturity assessments. They conduct surveys, check Jira data, and track DORA metrics. Sometimes the results are even presented neatly. But actions that actually improve the team’s everyday work? That is exactly where things often fall short.

What is an Agile Maturity Assessment?

For me, an Agile maturity assessment is a structured review of the current situation. It shows how consistently agile principles are lived in daily collaboration and how well teams turn them into real results.

The point is: It’s not about a pretty maturity level number. It’s about better decisions and effective next steps.

If you are looking for practical facilitation ideas for this, you will find many ready-to-use formats in our overview of retrospective methods and in the guide to retrospective check-ins.

Why Agile maturity assessments are important

When I set up assessments properly, they help me to:

- make the current maturity level transparent

- make systemic bottlenecks visible

- clearly prioritize improvements

- make progress measurable over time

- generate real change from discussions

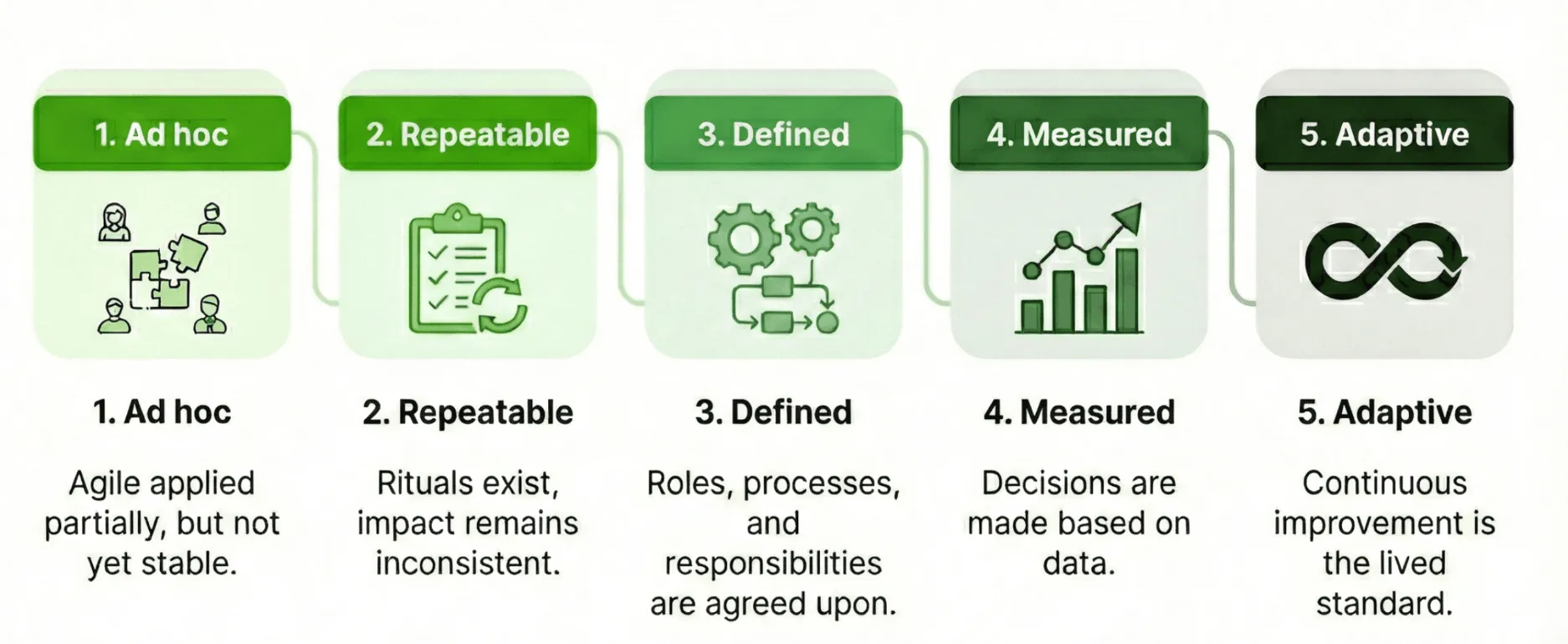

A simple maturity model in 5 stages

In practice, I often use a clear 5-stage model:

- Ad hoc: Agile is applied selectively, but is not yet stable.

- Repeatable: Rituals are present, impact remains inconsistent.

- Defined: Roles, processes, and responsibilities are aligned.

- Measurable: Decisions are made based on data.

- Adaptive: Continuous improvement is a lived standard.

Important: The goal is not to reach level 5 quickly. The goal is the next sensible step with high impact.

How I measure agile maturity in a meaningful way

I always combine three perspectives:

- Survey data: Perception of collaboration, clarity, and focus

- Delivery and DORA metrics: e.g., lead time for changes, deployment stability, change failure rate

- Qualitative evidence: Patterns from retrospectives, interviews, and blockers

Only this combination provides a realistic picture.
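To make the delivery perspective more tangible, here is a minimal sketch of how two such metrics could be derived from deployment records. The `Deployment` structure, its field names, and the sample data are assumptions for illustration; in practice the timestamps would come from your own CI/CD or ticketing exports.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Deployment:
    """Hypothetical record of one change going to production."""
    committed_at: datetime  # when the change was committed
    deployed_at: datetime   # when it reached production
    failed: bool            # whether it led to an incident or rollback


def lead_time_hours(deployments: list[Deployment]) -> float:
    """Median time from commit to production, in hours."""
    return median(
        (d.deployed_at - d.committed_at).total_seconds() / 3600
        for d in deployments
    )


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Share of deployments that were marked as failed."""
    return sum(d.failed for d in deployments) / len(deployments)


# Made-up sample data, not real measurements.
sample = [
    Deployment(datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 15), False),
    Deployment(datetime(2024, 1, 2, 9), datetime(2024, 1, 3, 9), True),
    Deployment(datetime(2024, 1, 4, 9), datetime(2024, 1, 4, 21), False),
    Deployment(datetime(2024, 1, 5, 9), datetime(2024, 1, 5, 11), False),
]

print(lead_time_hours(sample))      # 9.0
print(change_failure_rate(sample))  # 0.25
```

The median is used here instead of the mean so that a single slow release does not dominate the picture. Numbers like these only become meaningful next to the survey and qualitative perspectives.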

For teams that want to work specifically with this model, I have also put together the background in the article on the Spotify Health Check.

The concrete start: Spotify Squad Health Check Radar

This is exactly where I use the Spotify Squad Health Check Radar from our Echometer library. The format helps teams to reflect on their collaboration in a differentiated way, instead of just discussing a gut feeling.

I use the template in a simple process:

- The team answers the health check items anonymously on a scale.

- We look at the radar together and mark the largest deviations.

- We prioritize 1 to 3 improvement levers for the next cycle.

- We define owner, deadline, and success criterion.
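The process above can be sketched in a few lines of code. This is only an illustration under assumptions: a 1 to 5 answer scale, "largest deviation" read as the lowest average score, and hypothetical names (`pick_levers`, `Action`) plus made-up answers. It is not how Echometer computes anything internally.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class Action:
    """One agreed improvement with owner, deadline, and success criterion."""
    lever: str
    owner: str
    deadline: date
    success_criterion: str


def pick_levers(answers: dict[str, list[int]], max_levers: int = 3) -> list[str]:
    """Return the lowest-scoring dimensions, weakest first (1 to 3 of them)."""
    if not 1 <= max_levers <= 3:
        raise ValueError("prioritize between 1 and 3 levers per cycle")
    ranked = sorted(answers, key=lambda dim: mean(answers[dim]))
    return ranked[:max_levers]


# Hypothetical anonymous answers on an assumed 1-5 scale.
answers = {
    "value delivery": [4, 4, 5, 3],
    "technical quality": [2, 3, 2, 3],
    "trust": [5, 4, 4, 5],
    "focus": [2, 2, 3, 1],
    "learning": [3, 4, 3, 4],
}

levers = pick_levers(answers, max_levers=2)
print(levers)  # ['focus', 'technical quality']

# Each lever gets an owner, a deadline, and a success criterion.
action = Action(
    lever=levers[0],
    owner="Alex",
    deadline=date(2025, 1, 31),
    success_criterion="No priority changes mid-sprint for two sprints",
)
```

Capping the number of levers in code mirrors the rule from the process: the team leaves with a short, owned list instead of a long wish list.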

This step determines the impact. I describe how to turn insights into robust action items in the article on retrospective measures with tips and examples.

Spotify Squad Health Check Radar: How the retro works

- Random Icebreaker (2-5 minutes)

Echometer provides you with a generator for random check-in questions.

- Review of open actions (2-5 minutes)

Before starting on new topics, review what became of the actions from past retrospectives to check whether they were effective. Echometer automatically lists all open action items from past retros.

- Health Check

All team members can answer the health checks anonymously on a scale. Then go through the results of the health checks together and record any additional comments if necessary. If you use the same health checks in several retrospectives, you can also track trends over time in Echometer.

- We regularly deliver value to our users.

- Our technical quality supports fast changes.

- We work together as a team in a trusting and transparent manner.

- Our focus is clear and priorities are stable.

- We learn systematically from mistakes and experiments.

- Discuss retro topics

Use the following open questions to collect your most important findings. First, everyone writes down their answers individually, hidden from the others. Echometer lets you reveal each column of the retro board one at a time so you can then present and group the feedback.

- Which dimension has improved the most since the last measurement?

- Where do we currently see the biggest bottleneck and why?

- Which 1 to 3 measures will we commit to implementing by the next retro?

- Catch-all question (Recommended)

So that other topics also have a place:

- What else would you like to talk about in the retro?

- Prioritization / Voting (5 minutes)

On the retro board in Echometer, you can easily prioritize the feedback with voting. The voting is of course anonymous.

- Define actions (10-20 minutes)

You can create a linked action via the plus symbol on a feedback item. Not sure which action would be the right one? Then open a whiteboard on the topic via the plus symbol instead to brainstorm root causes and possible actions.

- Checkout / Closing (5 minutes)

Echometer enables you to collect anonymous feedback from the team on how helpful the retro was. This creates the ROTI score ("Return On Time Invested"), which you can track over time.
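If you want to see how a ROTI score could be tracked outside of a tool, here is a minimal sketch. It assumes ratings on a 1 to 5 scale and a simple average per retro; the function names and sample ratings are hypothetical, and the actual calculation in Echometer may differ.

```python
from statistics import mean


def roti_score(ratings: list[int]) -> float:
    """Average ROTI rating of one retro, rounded to two decimals."""
    if not ratings:
        raise ValueError("at least one rating is required")
    return round(float(mean(ratings)), 2)


def roti_trend(history: list[list[int]]) -> list[float]:
    """ROTI score per retro, in chronological order."""
    return [roti_score(ratings) for ratings in history]


# Made-up anonymous ratings from two consecutive retros (assumed 1-5 scale).
history = [
    [3, 3, 4],
    [4, 4, 5],
]

print(roti_trend(history))  # [3.33, 4.33]
```

A rising trend suggests the team finds the retros increasingly worth the time; a falling one is a prompt to change the format.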

Why Echometer is the best starting point for Agile Health Checks

From my perspective, Echometer is the best entry point because implementation is directly integrated into the concept:

- Quick start: Teams can begin with a structured process without a steep learning curve.

- Template library with substance: The Spotify Squad Health Check Radar is ready for immediate use.

- Measurable instead of felt: Trends over time make development visible.

- Focus on implementation: Measures are documented and tracked.

- Scalable: Insights can be evaluated across multiple teams.

This turns an assessment from a report that gathers dust in a drawer into a management tool for real improvement.

If you want to start right away, take a look at our Team Health Check Software or at the Team Retrospective Software.

Best practices for effective assessments

- few, business-relevant dimensions instead of overloaded questionnaires

- clear priorities per cycle instead of too many parallel initiatives

- binding responsibilities for each measure

- short review cycle of 2 to 4 weeks

- transparent tracking over several iterations

If you want to strengthen your facilitation setup for this, you will find additional practical ideas in our eBook on retro moderation and in the best retrospective games online.

Conclusion

Agile maturity assessments rarely fail because of the measurement itself. They fail because the measurement is not followed by consistent implementation.

If you combine assessments with a clear model, a concrete template like the Spotify Squad Health Check Radar and a clean implementation process, “we have surveyed” finally becomes “we have improved”.